Update

In this update, a few bugs have been fixed and the limit on the number of pages to parse has been removed (it is now unlimited).

Features (v1.3.1)

If you have feature suggestions, feel free to tell me via the “Comments” section.

Description

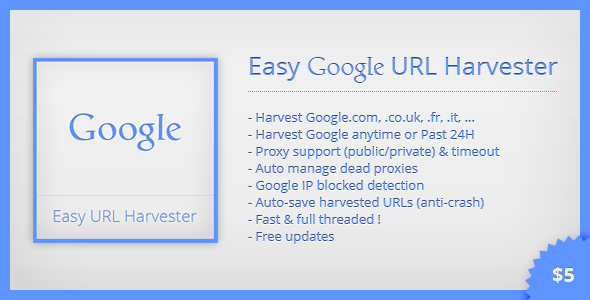

Easy Google URL Harvester is the simplest tool to harvest Google URLs from a keywords list. This tool has been built for SEO purposes, and is perfect for finding competitors, websites related to your niche, new leads for your business, etc.

You can use public and/or private HTTP proxies to avoid being temporarily blocked by Google. If a proxy is dead or too slow, the query is retried with another proxy until it succeeds. You can also use this software without a proxy (if you have only a few keywords), and the engine will show a warning message if your IP seems to be temporarily blocked by Google.
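The retry behaviour described above can be sketched roughly as follows. This is only an illustration, not the tool's actual code: `fetch_with_proxy_retry`, the `fetch` callable and the `max_attempts` cap are hypothetical names, and the cap itself is an assumption (the description says the tool keeps retrying until the query succeeds).

```python
import random
from typing import Callable, Optional, Sequence


def fetch_with_proxy_retry(
    query: str,
    proxies: Sequence[str],
    fetch: Callable[[str, Optional[str]], str],
    max_attempts: int = 10,
) -> str:
    """Send the query through different proxies until one of them succeeds.

    `fetch(query, proxy)` is a hypothetical callable that performs the real
    request and raises TimeoutError or ConnectionError on a dead/slow proxy.
    """
    if not proxies:
        # No proxy configured: send the query directly.
        return fetch(query, None)

    candidates = list(proxies)
    random.shuffle(candidates)  # spread the load across the proxy list
    last_error: Optional[Exception] = None
    for proxy in candidates[:max_attempts]:
        try:
            return fetch(query, proxy)
        except (TimeoutError, ConnectionError) as exc:
            # Dead or slow proxy: remember the error and try the next one.
            last_error = exc
    raise RuntimeError(f"all proxies failed for {query!r}") from last_error
```

Passing the request function in as a parameter keeps the sketch independent of any particular HTTP library.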

Many options help you get highly targeted URLs, for example choosing the Google domain extension (.com, .co.uk, .fr, ...), choosing between anytime and past 24 hours results, etc.

A keywords scraper is included. This tool uses Google suggestions to generate a lot of new keywords from a small list.

Another built-in tool is a META tags scraper, which harvests the Title, Description and Keywords from a list of URLs.
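For readers curious what such a META tags scraper involves, here is a minimal sketch using only Python's standard library. It is an assumption about the general approach, not the tool's implementation: `MetaTagParser` and `extract_meta` are hypothetical names, and downloading the page is left out.

```python
from html.parser import HTMLParser


class MetaTagParser(HTMLParser):
    """Collect the <title>, meta description and meta keywords of one page."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.description = ""
        self.keywords = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta":
            # Attribute values keep their case, so normalise the name.
            name = (attributes.get("name") or "").lower()
            content = attributes.get("content") or ""
            if name == "description":
                self.description = content
            elif name == "keywords":
                self.keywords = content

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def extract_meta(html: str) -> dict:
    """Return the title, description and keywords found in an HTML string."""
    parser = MetaTagParser()
    parser.feed(html)
    parser.close()
    return {
        "title": parser.title.strip(),
        "description": parser.description,
        "keywords": parser.keywords,
    }
```

Calling `extract_meta` on the HTML of each URL in the list would give one record per page.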

All harvested URLs are auto-saved to a text file in the root directory (Raw.txt), so you can close or kill the process at any time without losing already harvested data (anti-crash system).
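One common way to get this kind of crash safety is to append every batch of URLs to the file and flush it to disk immediately. The sketch below shows that idea. It is an assumption about the technique, not the tool's real code, and `append_urls` is a hypothetical helper.

```python
import os


def append_urls(urls, path="Raw.txt"):
    """Append each URL on its own line and force the data to disk right away."""
    with open(path, "a", encoding="utf-8") as handle:
        for url in urls:
            handle.write(url + "\n")
        handle.flush()             # push Python's buffer to the OS
        os.fsync(handle.fileno())  # ask the OS to write it to disk
```

Because the file is opened in append mode, killing the process between two calls leaves everything written so far intact.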

You can use all Google operators to get accurate results, such as: site, inurl, intitle, cache, filetype, info, link, related, OR, AND, location, 1..10, *, +, -, ...

You can use a footprint, that is, a term repeated in every query. For example, if your footprint is “site:.edu” and your keywords list contains the numbers from 1 to 5, the footprint is combined with each of the five keywords to build the final keywords.
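The resulting keyword list is not shown on this page, so the sketch below only illustrates the idea. It assumes the footprint is placed before each keyword and separated by a space; the real tool may order or join them differently. `apply_footprint` is a hypothetical name.

```python
def apply_footprint(footprint, keywords):
    """Combine one footprint with every keyword.

    Assumption: the footprint comes first, followed by a space and the keyword.
    """
    return [f"{footprint} {keyword}".strip() for keyword in keywords]


# Footprint "site:.edu" with the keywords 1 to 5, as in the example above.
queries = apply_footprint("site:.edu", [str(n) for n in range(1, 6)])
for query in queries:
    print(query)
```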

History

Update 1.3.1

Update 1.3

Update 1.2

Update 1.1

Update 1.0

Support

If you have any questions or feature suggestions, feel free to ask via the “Comments” section. I’ll try to reply as fast as possible.

Quick help file included!

Enjoy